Critical evaluation of information and data is a crucial element of information literacy and inquiry learning. It is a complex, higher-order cognitive process that depends on understanding the use of primary and secondary sources, the nature of knowledge and the construction of an argument supported by evidence. As such, it is highly discipline-specific and contextual. Thus, it might be expected that different subject areas in the Australian Curriculum would treat critical evaluation differently. In this post, I present my analysis of critical evaluation of information and data in the Australian Curriculum in relation to:

- the placement of critical evaluation in particular subjects and year levels, and

- the criteria used to evaluate information and data.

The subject areas of Science, History, Geography, Economics and Business, and Civics and Citizenship all include an inquiry skills strand that mentions critical evaluation of information and data. The Critical and Creative Thinking (CCT) and Information and Communication Technology (ICT) general capabilities also address critical evaluation. However, as I have argued in a previous post, analysing the Australian Curriculum both vertically (through the year levels F-12) and horizontally (across each year level) reveals gaps, omissions and inconsistencies in the use of evaluation criteria. Some of these issues are due to subject-specific information practices, while others are due to a lack of alignment.

To set the scene, consider the alarming findings of recent research by Elena Forzani in the US:

In a study of 1,429 7th grade students from 40 districts across two northeastern states, Forzani found that fewer than 4 percent of students could correctly identify the author of an online information source, evaluate that author’s expertise and point of view, and make informed judgments about the overall reliability of the site they were reading.

Forty-four percent of students in the study did only one of those things correctly.

Girls performed better than boys across the board, and by statistically significant margins when it came to identifying an author and evaluating an author’s point of view.

More affluent students (who were not eligible for free or reduced-price lunch) performed significantly better than their peers on three of the four dimensions of “critical evaluation.”

I’ll return to Forzani’s research soon, but first, let’s look at the evaluation criteria used in the Australian Curriculum in Science, History, Geography, Economics and Business, Civics and Citizenship, CCT and ICT.

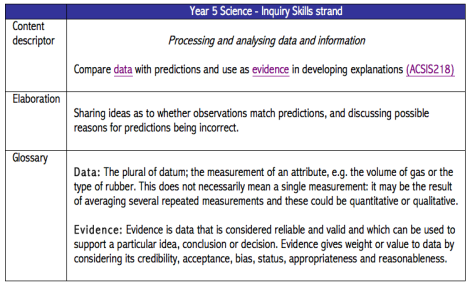

The content descriptors in the Australian Curriculum are the top-level information (i.e. the information shown on the webpage without clicking through to lower levels). Elaborations of the content descriptors are provided via a hyperlink, and terms within the content descriptors are sometimes hyperlinked to a glossary. For example, Table 1 presents content descriptor ACSIS218 – Year 5 Science, while Table 2 presents ACHHS171 – Year 9 History:

In the Science example, the evaluation criteria are validity, reliability, credibility, acceptance, bias, status, appropriateness and reasonableness, whereas in the History example, the criteria are reliability and usefulness. None of these terms is explained in a glossary. By contrast, some terms are explained in the Senior Science curriculum; for instance, in Senior Biology Unit 1 (ACSBL003) the glossary defines validity and reliability thus:

Reliability: Reliability is the degree to which an assessment instrument or protocol consistently and repeatedly measures an attribute achieving similar results for the same population.

Validity: The extent to which tests measure what was intended; the extent to which data, inferences and actions produced from tests and other processes are accurate.

In Forzani’s research, Year 7 students were asked to read and evaluate a secondary science source for ‘reliability’. This is problematic in itself, because in science the term has a very specific meaning when applied to data and to the conduct of the experimental method. In that context, reliability is defined as in the Senior Science glossary above. However, in the evaluation of secondary sources (for instance journal articles, websites or news stories), reliability seems to be used in two distinct ways:

- to evaluate research reports for the way they have addressed reliability in their method

- to evaluate the overall credibility of a source

In Forzani’s research, it seems that the latter meaning was used. Using the same term for different ways of evaluating data, method and information is potentially confusing for students; however, this is not problematised in either Forzani’s research or the Australian Curriculum.

In Table 3 I have listed all the evaluation criteria mentioned in the Australian Curriculum. In this table I have not specified which subject area or general capability each criterion comes from; however, I have noted when a term is defined in the glossary.

In Tables 4, 5 and 6 I show a vertical and horizontal breakdown of where the evaluation criteria are used in the subject areas and general capabilities. The dot points show the criteria used in the content descriptors; where a criterion is used in the elaborations and/or glossary, it is shown in italics. I have colour-coded frequently used criteria: relevancy in green, usefulness in blue, reliability in red and validity in purple.

The evaluation criteria relate to two main aspects of inquiry learning:

1) the relevance and usefulness of the source to the inquiry, and

2) the qualities of the source.

These aspects are displayed in Tables 5 and 6.

The tables illustrate the similarities in criteria used across a number of subject areas. However, because there is no glossary explaining how the terms are used in each subject area, it is not possible to ascertain how a given subject area uses a term. As I argued above, because the nature of knowledge, evidence, argument, and primary and secondary sources differs across disciplines, criteria such as reliability and validity are likely to be judged differently. Furthermore, when the curriculum is analysed horizontally, a lack of alignment can be seen. For instance, in Civics and Citizenship, students are expected to distinguish fact from opinion in Year 3, whereas in History they don’t do this until Year 7.

What is missing from the evaluation criteria? In a recent professional development session I asked teacher-librarians to nominate the criteria they used with their students to evaluate information. Interestingly, many of the checklists they used included currency; however, currency is not mentioned explicitly anywhere in the Australian Curriculum.

As I have noted elsewhere (Lupton, 2012, 2014), the Australian Curriculum is necessarily a flawed document. Due to the nature of its development, it cannot comprehensively represent subject-specific information literacy and inquiry learning. It is important to incorporate the criteria listed in the curriculum at the appropriate year level. However, because the curriculum documents provide limited information, it is up to teachers and teacher-librarians to flesh out and define the criteria, and to incorporate aspects that have been omitted. Feel free to respond to this post describing your own practice in this area.

Lupton, Mandy (2014) Inquiry skills in the Australian Curriculum v6: a bird’s-eye view. Access, 28(4), pp. 8-29.

Lupton, Mandy (2012) Inquiry skills in the Australian curriculum. Access, 26(2), pp. 12-18.

Thanks Mandy,

Bill.

Congratulations, Mandy. This is important and useful data confirming our daily lived experience in secondary schools. You have identified markers which need to be taken on as appropriate in all subjects and year levels so that by variation of experience students gain a full range of information competencies as they progress – as previously promoted by you and Christine Bruce and others. At my school we work to distinguish between fact and opinion within our research classes, and particularly to give students the experience of immersion in properly referenced online database material. We explicitly talk about why particular sources have been recommended for particular learning purposes. The criteria presented here are a very useful listing and beyond what we currently address, so I look forward to taking these into our future work with teachers in curriculum design. Anne

Dear Mandy, I also found your comments on critical literacy interesting and relevant. Can you please recommend further recent research regarding students’ inability to be critical with information? Thanks, Margo

Margo, here are some recent studies looking at school students (to get behind the paywall you’ll need to use a subscription database such as one provided by your state library or university library):

Promoting secondary school students’ evaluation of source features of multiple documents

http://www.sciencedirect.com/science/article/pii/S0361476X13000131

Debating credibility: the shaping of information literacies in upper secondary school

http://www.emeraldinsight.com/doi/abs/10.1108/00220411111145043

How high-school students find and evaluate scientific information: A basis for information literacy skills development

http://www.sciencedirect.com/science/article/pii/S0740818808001382

Here are a couple of freely available articles:

Youth and Digital Media: From Credibility to Information Quality

http://papers.ssrn.com/sol3/papers.cfm?abstract_id=%202005272

Information skills and critical literacy: Where are our digikids at with online searching and are their teachers helping?

http://www.ascilite.org.au/ajet/ajet27/ladbrook.html

Thanks Mandy, much appreciated!
